Platform and engineering teams

Teams building agents, copilots, or automation systems that can call tools, change data, modify repositories, or trigger business actions.

Publications

TNT Intelligence is building the operational layer between governance, runtime control, and trusted autonomous execution.

This section presents work that already exists. Rather than publishing only abstract positioning on responsibility and decision architecture, TNT publishes concrete artifacts for governed delegation, runtime enforcement, integration, and trusted autonomy.

The result is not another agent-orchestration stack. The focus is the harder question behind consequential AI systems: under which conditions may a system actually act, and how is that enforced in practice?

As the AI Act turns governance questions into concrete implementation pressure, organizations need more than policy documents. They need operating models that classify use cases, assign responsibility, constrain execution, and preserve auditability.

That is the gap TNT addresses. The architecture follows a clear sequence: GTAF defines the governance model, Runtime enforces it, SDK carries it into real systems, and ITA extends the same logic into trusted autonomy rather than improvised orchestration.

GTAF defines explicit artifacts for scope, decision authority, responsibility, validity, and intervention instead of relying on prose or post-hoc interpretation.


GTAF Runtime turns evaluated governance outputs into executable allow/deny decisions. That is where governance stops being descriptive and becomes operational.


GTAF SDK reduces the adoption gap between governance artifacts and working software systems, without mutating the semantics of the runtime core.


ITA carries the same logic into governed execution spaces, visible capability surfaces, and a single audited enforcement boundary for real external effects.



Decision-makers who need AI systems that do more than prototype well. They need systems that can act productively without dissolving accountability.

Organizations facing AI Act obligations around risk classification, oversight, documentation, logging, robustness, and controllability in consequential use cases.

Execution rights are tied to governed context and validity, not to the permanent label of an agent or service account.

The architectural aim is to constrain real effects before they happen and keep attempted, denied, and executed actions auditable.
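The two principles above (execution rights bound to governed context and validity, and auditability of attempted, denied, and executed actions) can be illustrated with a minimal Python sketch. It is not TNT's implementation: `Grant`, `EnforcementBoundary`, and `attempt` are hypothetical names, and the sketch assumes a grant covers one action in one context until an expiry time.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Callable


# Hypothetical grant: authority bound to a governed context and a validity
# window, not to the permanent identity of an agent or service account.
@dataclass(frozen=True)
class Grant:
    context: str          # governed execution context, e.g. "release-space"
    action: str           # the external effect this grant covers
    valid_until: datetime


@dataclass
class EnforcementBoundary:
    grants: list[Grant]
    audit_log: list[dict] = field(default_factory=list)

    def _record(self, event: str, context: str, action: str, detail: str = "") -> None:
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "context": context,
            "action": action,
            "detail": detail,
        })

    def attempt(self, context: str, action: str, effect: Callable[[], object]) -> object | None:
        # Log the attempt before any decision, so denied actions stay visible too.
        self._record("attempted", context, action)
        now = datetime.now(timezone.utc)
        allowed = any(
            g.context == context and g.action == action and now < g.valid_until
            for g in self.grants
        )
        if not allowed:
            self._record("denied", context, action, "no valid grant in this context")
            return None  # the side effect is never invoked
        result = effect()
        self._record("executed", context, action)
        return result


# Usage: one grant valid for 15 minutes in a single governed context.
boundary = EnforcementBoundary(grants=[
    Grant("release-space", "tag_release", datetime.now(timezone.utc) + timedelta(minutes=15)),
])
boundary.attempt("release-space", "tag_release", lambda: "tagged")      # executed
boundary.attempt("release-space", "delete_branch", lambda: "deleted")   # denied
print([e["event"] for e in boundary.audit_log])
# ['attempted', 'executed', 'attempted', 'denied']
```

The effect is passed in as a callable so the boundary, not the caller, decides whether it ever runs; the denial happens before the side effect rather than being reconstructed from logs afterwards.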

Planning and tool routing are useful, but they are not authority. TNT's work focuses on the control plane beneath execution.

AI systems that review documents, propose approvals, trigger internal actions, or interact with business APIs where accountability cannot be hand-waved away.

Environments where authority, traceability, intervention, and scope discipline matter more than raw automation speed.

Release agents, repo tooling, CI/CD automation, or operational assistants that should act usefully without quietly accumulating blanket privileges.

Products that move beyond chat or recommendations and must be designed so real effects remain bounded, reviewable, and intervention-ready.

The architectural layer for systems that must remain useful, governable, and auditable while acting with real-world effect.

Infrastructure for Trusted Autonomy

Public architecture model

A runtime architecture for systems that must act with real effect while staying governable. ITA extends GTAF into execution spaces, capability exposure, enforcement, and audit.

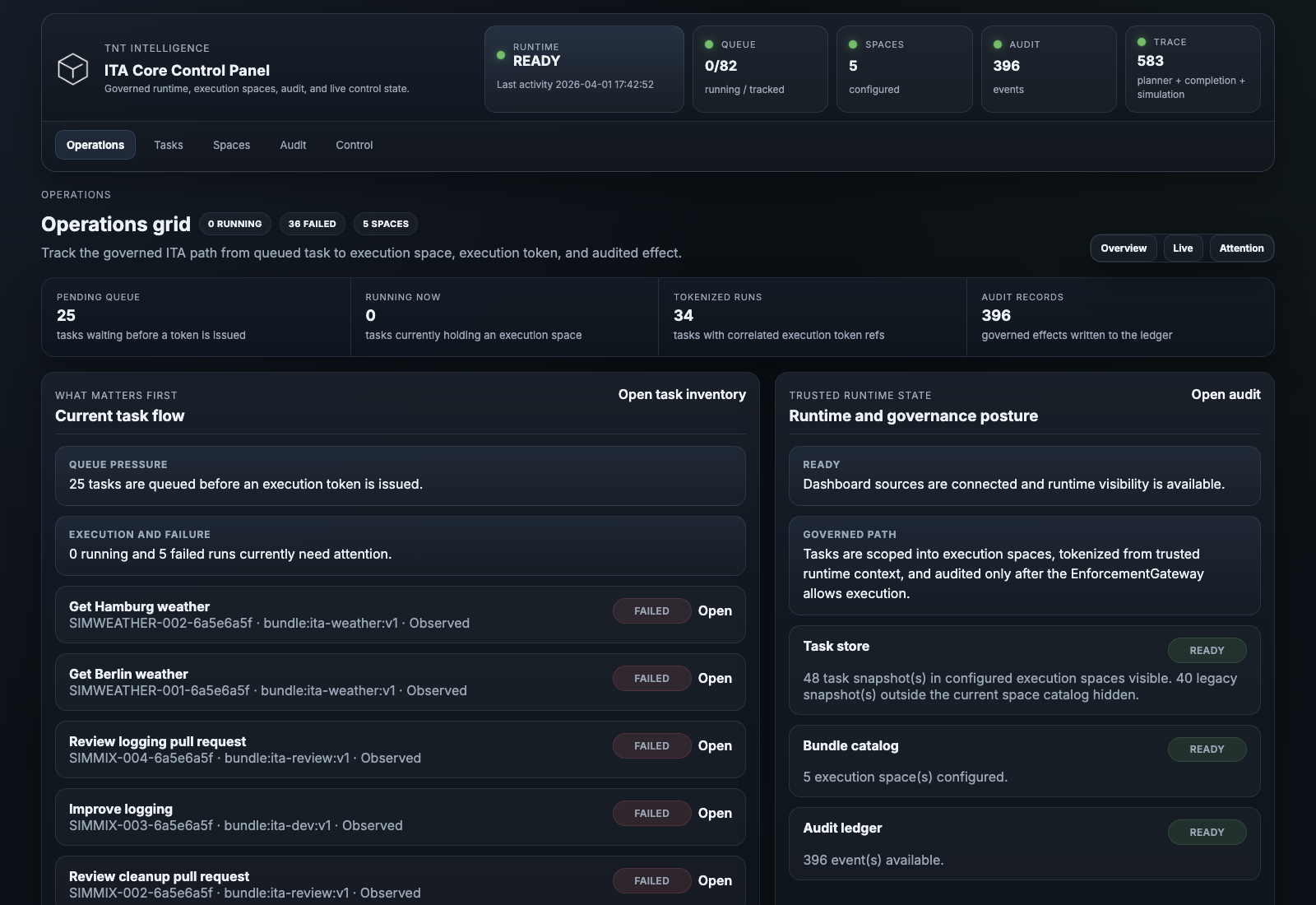

ITA Dashboard

Shown here is an example control panel built on top of ITA. What matters is the architecture underneath: execution spaces, capability visibility, enforcement, and audit derived from GTAF and extended into runtime.

This becomes architecturally interesting where GTAF is translated into execution spaces, enforcement, and audit at runtime.

Understand execution spaces, enforcement, and audit

The governance layer for classifying risk, defining authority, and making delegated action structurally legible.

Public normative reference

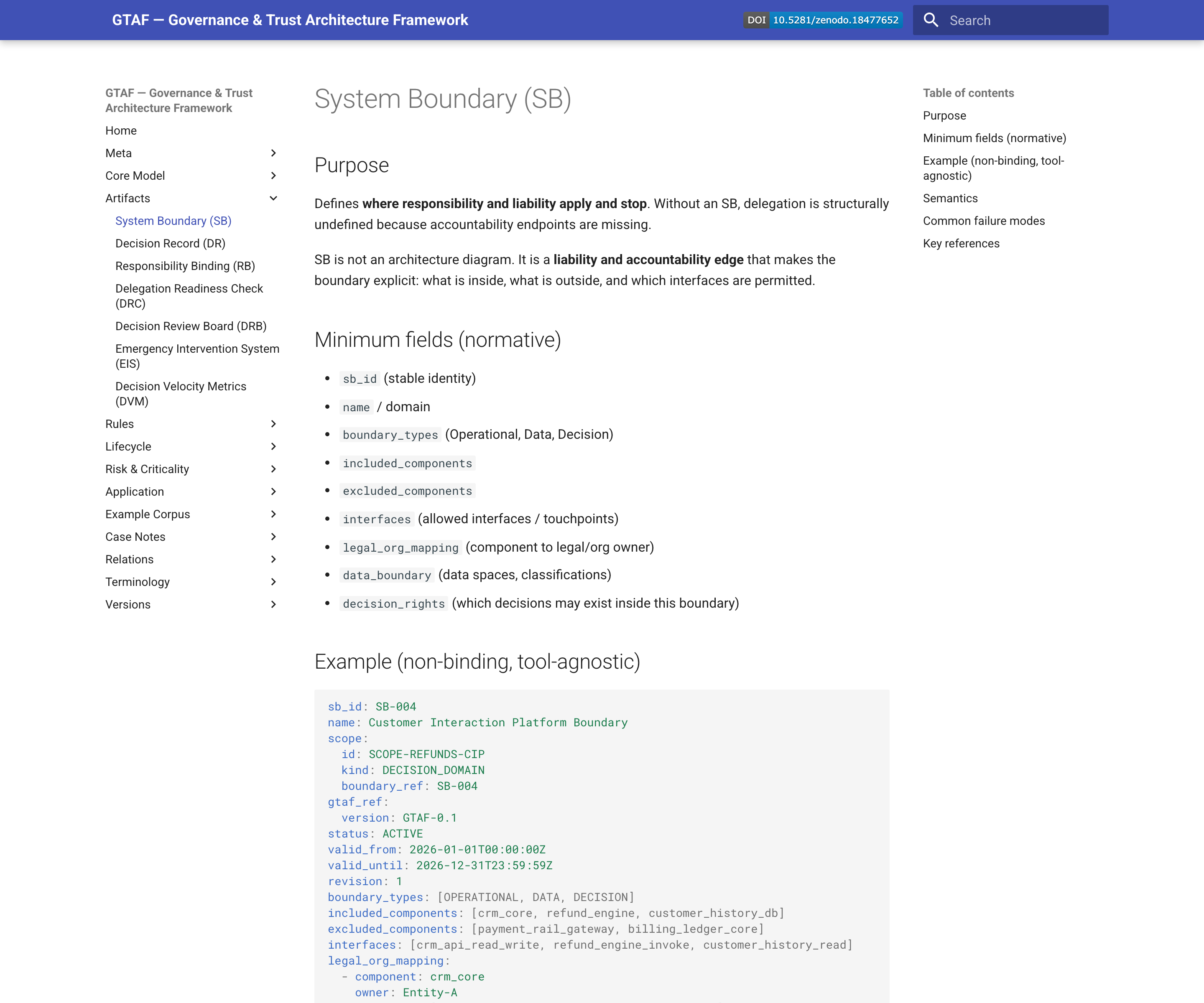

A governance framework for AI systems that may act, delegate, or produce consequential effects. GTAF turns scope, authority, responsibility, and validity into structured operational artifacts.

GTAF Reference

The public reference already shows GTAF as a concrete artifact system: governance structured into boundaries, records, bindings, lifecycle logic, and explicit permission states.

This becomes useful where governance stops being prose and turns into explicit artifacts, decision logic, and validity states.

Explore the GTAF artifact model

The control plane that turns governance decisions into executable system behavior and adoptable integration paths.

Public runtime core · reference implementation available

The enforcement core that turns evaluated governance outputs into executable allow/deny decisions. The public implementation demonstrates the contract, but the runtime model is broader than one language.

The interesting step here is where evaluated governance artifacts become binary execution decisions with explicit reason paths.
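To make the step from evaluated artifacts to binary decisions concrete, here is a minimal Python sketch under stated assumptions. `EvaluatedArtifact`, `Decision`, `decide`, and the reason codes are hypothetical illustrations, not the GTAF Runtime contract, which the public reference implementation defines. The checks (intervention, assigned authority, validity, scope) borrow the artifact categories GTAF names; their order is an arbitrary choice for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Outcome(Enum):
    ALLOW = "allow"
    DENY = "deny"


# Hypothetical evaluated governance output for one governed context.
@dataclass(frozen=True)
class EvaluatedArtifact:
    scope: frozenset[str]       # actions inside the declared boundary
    decision_authority: str     # accountable role; empty means unassigned
    valid_until: datetime
    intervention_active: bool   # e.g. a human has paused execution


@dataclass(frozen=True)
class Decision:
    outcome: Outcome
    reasons: tuple[str, ...]    # every check evaluated, in order


def decide(artifact: EvaluatedArtifact, action: str, now: datetime | None = None) -> Decision:
    if now is None:
        now = datetime.now(timezone.utc)
    # Each entry: (check passed?, code if passed, code if failed).
    checks = [
        (not artifact.intervention_active, "NO_INTERVENTION", "INTERVENTION_ACTIVE"),
        (bool(artifact.decision_authority), "AUTHORITY_ASSIGNED", "NO_AUTHORITY"),
        (now < artifact.valid_until, "VALID", "VALIDITY_EXPIRED"),
        (action in artifact.scope, "IN_SCOPE", "OUT_OF_SCOPE"),
    ]
    reasons: list[str] = []
    # ALLOW only if every check passes; the first failing check ends evaluation with DENY.
    for passed, ok_code, fail_code in checks:
        if not passed:
            return Decision(Outcome.DENY, (*reasons, fail_code))
        reasons.append(ok_code)
    return Decision(Outcome.ALLOW, tuple(reasons))


# Usage with a fixed clock so the result is reproducible.
fixed_now = datetime(2025, 1, 1, tzinfo=timezone.utc)
artifact = EvaluatedArtifact(frozenset({"approve_invoice"}), "finance-lead",
                             datetime(2030, 1, 1, tzinfo=timezone.utc), False)
print(decide(artifact, "approve_invoice", fixed_now).outcome)  # Outcome.ALLOW
print(decide(artifact, "wire_transfer", fixed_now).reasons)
# ('NO_INTERVENTION', 'AUTHORITY_ASSIGNED', 'VALID', 'OUT_OF_SCOPE')
```

Recording each passed check, not only the failing one, is what gives every allow or deny a traceable reason path.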

See how governance becomes runtime enforcement

Public integration layer · reference implementation available

The adoption layer that helps real systems load artifacts, shape execution context, and call the runtime cleanly. The public implementation is one concrete path, but the integration model is not language-specific.

The key question here is how governance artifacts and runtime contracts arrive in real systems without semantic drift.

Understand the GTAF integration layer

Translate policy and regulatory pressure into decision layers, accountable roles, explicit scope boundaries, and concrete governance artifacts.

Shape the boundary between AI proposals and real external effects so that systems can act usefully without acting beyond mandate.

Support teams that need more than orchestration: product, platform, and architecture work for systems that must stay governable in operation.

From GTAF through Runtime and SDK to ITA, TNT already brings public reference work, runtime building blocks, and applied architecture to these questions. The conversation does not have to start from theory.

When these questions move from interest to implementation, TNT is a serious conversation partner.

Discuss your context